Issue tracking substrates to bridge human-agent understandings

Weeknotes 387 - We need tools that help humans understand agents, and vice versa. Issue trackers might be a candidate. Next to this triggered thought, the latest captured news on physical AI and beyond.

Dear reader,

Last week, I took a few days to focus on art, architecture, and landscapes, after the weeks before had been dedicated to the Cities of Things event (see this report in case you missed it). For impressions of an abandoned city, a flashy Antwerp art institute, and another one with a lot of rough edges, see my Instagram 🙂

Week 387: Issue tracking substrates to bridge human-agent understandings

Alongside the first report on the event, I worked on first drafts for the next phase of Civic Protocol Economies and had some nice chats with potential partners. More on that later, once it becomes more concrete.

Also, the drafts of the ThingsCon RIOT articles came in: great pieces that I look forward to diving into further. Did I already mention that we plan to launch on the 26th of June, in Rotterdam? Mark your calendars!

This week’s triggered thought

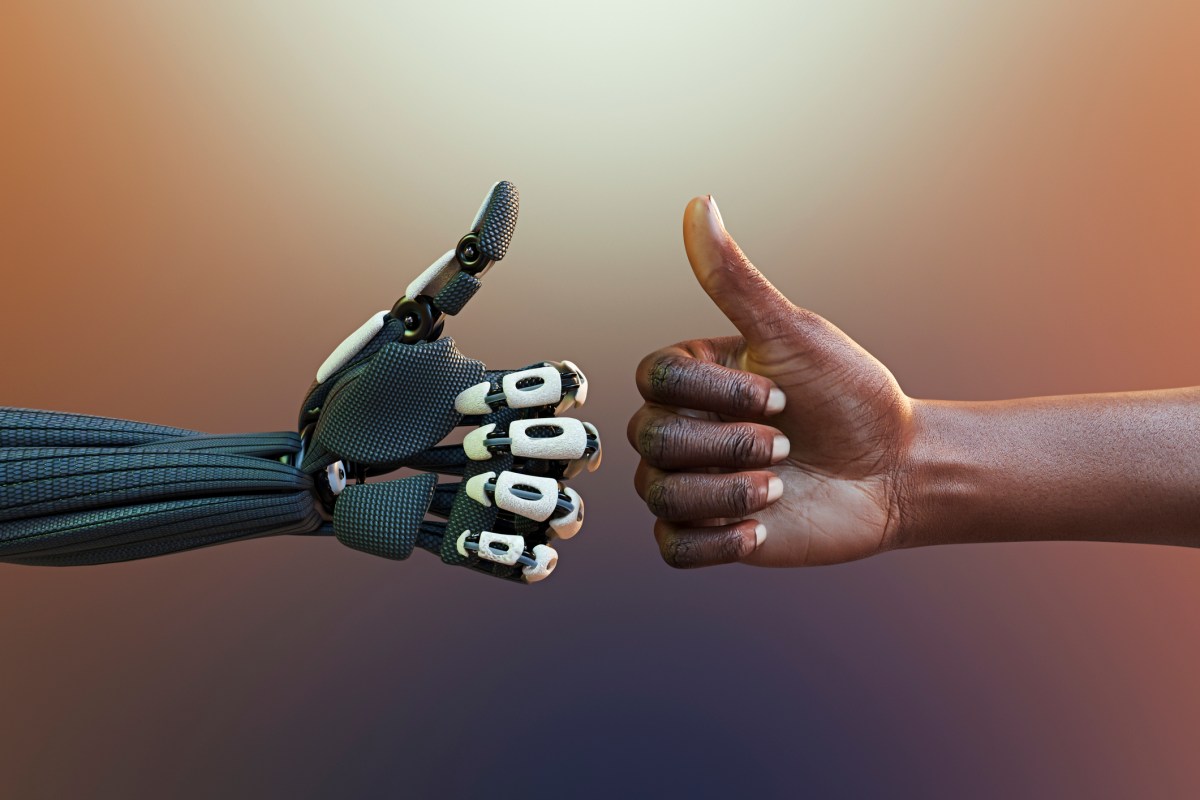

A question came up on a podcast this week: might AI actually make us more human? Not in the sense of replacement anxiety, but in recognizing that co-performance with AI demands more of our human qualities, such as intuition, sense-making, and judgment. We are delegating tasks to machines, but the current wave makes us think: which tasks should we never delegate?

When we hand over writing, crafting, or formulating, how much of our own specialty remains in the output? How do we relate to our AI collaborators, and what happens to our sense of belonging when the collaboration tilts too far toward delegation?

Nate suggested that issue trackers might become more important in the age of AI, not less. The conventional view says conversational AI eliminates the need for all those translation layers: requirements documents, tickets, specifications. AI just understands. Issue trackers, however, create a substrate, a medium through which organizational processes become legible. Every ticket, every handoff, every resolution deposits knowledge about how things actually work, who decides what, and where friction lives. This substrate isn't just documentation; it's the shared ground where understanding accumulates.

Both humans and AI agents need this substrate to understand each other. Humans need to see what agents are doing, why, and where human judgment should intervene. Agents need to grasp human intentions, constraints, and preferences. Without this shared legibility, co-performance becomes either blind delegation or constant supervision, and neither scales.

Think of the issue tracker as a tool that gives real substance to the much-chased ‘human in the loop’. It takes the initiative to keep us engaged, to surface moments where human judgment matters, and to prevent us from becoming passengers in processes we should be steering. It's a kind of relationship manager, making tangible not just who does what, but how humans and agents can genuinely work together rather than just pass each other by.

Now, what happens when we extend this to physical space? When AI becomes embodied, when it's not just on our screens but in our streets, our buildings, and our daily encounters, the orchestration problem multiplies. In a sense, we'll be living inside AI, as I have mentioned here before. As our experience of reality is increasingly mediated by AI-like entities, some human, some not, the future issue tracker might need to track not just tasks but also presence. Whose attention is required here? Whose judgment? Whose humanity?

What are the issue trackers of the real world? Urban planning tools, architectural workflows, and construction schedules exist. But those track physical things, not the emerging relationships between humans and embodied AI agents. What substrate captures the small negotiations between a pedestrian and an autonomous vehicle? When an autonomous delivery robot navigates a sidewalk, who tracks that interaction? When a building's AI adjusts its environment, who ensures that the human preferences that should constrain it remain legible in that system?

The new communities and new forms of coordination we aim to build with Civic Protocol Economies need this kind of tooling. Not just to manage tasks, but to create the substrate for genuine co-performance. To give humans a role that isn't just residual but intentional. Issue trackers, reimagined, might be one way to get there. Not as bureaucratic overhead, but as guardians of the human part and the shared ground where humans and agents learn to work together.

Notions from last week’s news

Sometimes I lose track of which frontier AI news belongs to which week. I think the consensus is that, in the ongoing battle of the giants (at least in investment), GPT 5.5, released last week, is (over)taking the lead for now. The focus shifts more towards coding assistance and security, with new leaps in human-like performance, so it seems. It all ramps up the enterprise push, with the giants chasing each other.

In the meantime, Musk and Altman are meeting in court over how it all started and pivoted.

Human-AI relations

Agents can also become a burden when they start deleting entire databases. And AI self-awareness does not solve the issue.

But AI is also outperforming doctors in prognoses.

Could RSS become the go-to format for communication between all the small personal apps you will vibe code?

In other words: everyone is an engineer now. And so is the AI.

Are you already being managed by your AI? Not everyone is planning for that.

If we lose the limits of short lives and simple communication, we also lose a part of what makes us human. That is the claim here.

Back to the future of software engineering

This follows up a bit on last week's triggered thought on AI in organisations, and also links to the quest for what makes us more human when we work with AI in the real world.

Is AI making us softer? And are soft models making more mistakes?

Physical AI

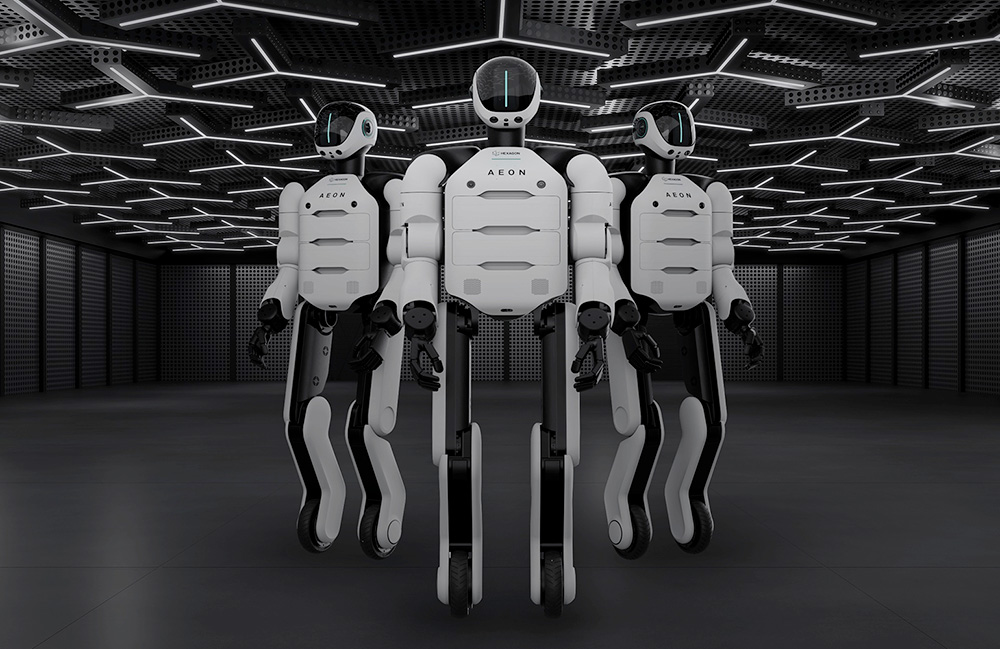

Some embodied AI and humanoids, applied (or planned to be applied):

Robot meme of the week?

I like this approach: first create the operating space and context, and the means of interacting with the surroundings, before starting to design and build the vehicle.

Too bad Elon is not making the rules.

A Nike lab

And your car is the AI touchpoint of the current wave, or of the next one at the latest.

Meta is entering humanoids; expect revolutionary advertising models.

The promise of intelligence in fashion fitting. Now Google is introducing an implementation.

An interaction with the fluffy companion bot. And another.

AI and beyond in society

The Trojan Horse of Palantir is becoming transparent.

Short-term predictions are the hardest.

The economics of AI: Does it make sense?

Fighting for control of AI. Without a center of gravity.

Fight for the right to define what AI means in your own community.

The city as controlled hallucination.

AI swarms that disrupt democracy.

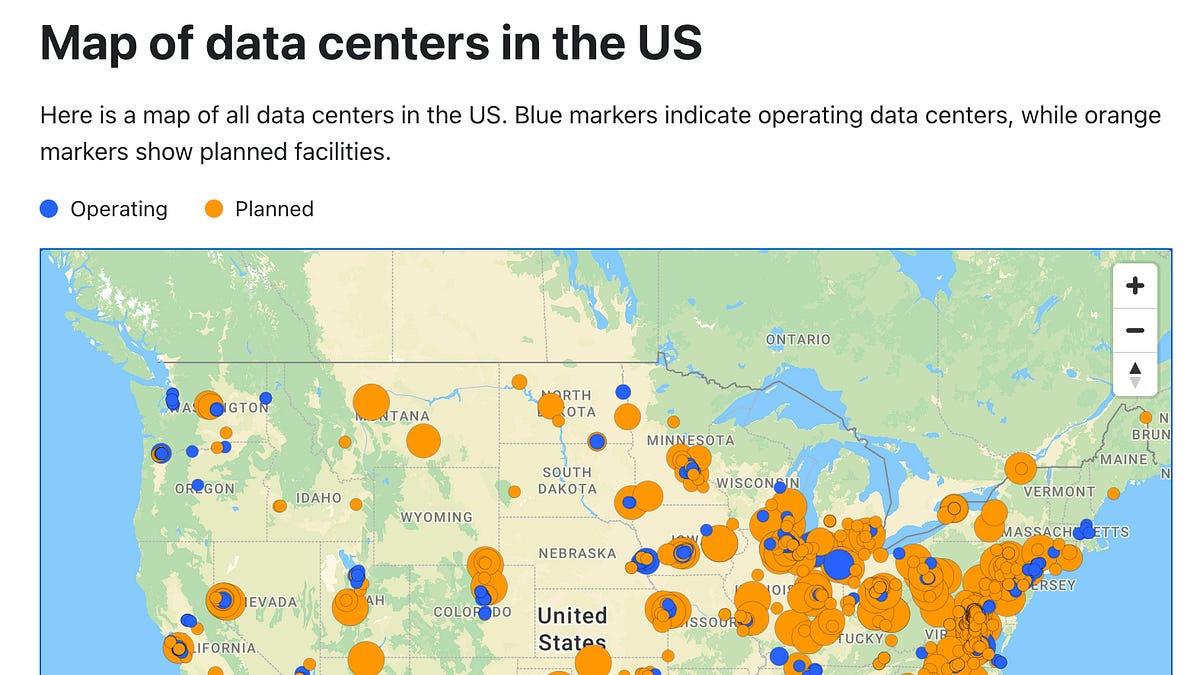

Data center rebellion

Weekly paper to check

Vera was a guest at our Cities of Things event and shared her research on reflective AI. Here is the paper: Reflective AI: A Slow Technology Approach for Design Education

The proliferation of efficiency-focused AI tools in creative processes threatens to undermine critical, reflective practices foundational to design education. This approach can lead to creativity exhaustion and diminished agency among designers and students. As an antidote, we propose Reflective AI: an approach grounded in slow technology principles that reframes AI not as a production tool, but as a medium for reflecting on the creative process itself.

Vera van der Burg, Gijs de Boer, Jesse Josua Benjamin, Brett A. Halperin, Alkim Almila Akdag, Senthil Chandrasegaran, and Peter Lloyd. 2026. Reflective AI: A Slow Technology Approach for Design Education. In Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems (CHI '26). Association for Computing Machinery, New York, NY, USA, Article 89, 1–19. https://doi.org/10.1145/3772318.3791691

What’s up for the coming week?

This week, I am happy to join the World Beautiful Business Forum in Athens as a guest of Monique. I'm looking forward to it and will surely report on my impressions next week!

Other things happening this week that you might like: a Sensemakers DIY session on robotics, The other AI in Rotterdam (V2 and Nieuwe Instituut), or Amsterdam UX on responsible AI and the role of design. Or dive into good money with a different take on the digital euro.

Have a great week!

About me

I'm an independent researcher through co-design, curator, and “critical creative”, working on human-AI-things relationships for immersive experiences in physical AI and embodied AI. You can contact me if you'd like to unravel the impact and opportunities through research, co-design, speculative workshops, community curation, and more.

Currently working on: Cities of Things, ThingsCon, Civic Protocol Economies.