Embody tech to master human intelligences

Weeknotes 384 - Thoughts on repetitiveness as a signal for losing or learning in an intelligence world, new strategies for care. And the latest news captures on physical AI.

Dear reader!

Let’s take a view from a distance… Referencing the Artemis mission. I followed that trip and was awake at splashdown. Watching the communication, it is always a strange mixture of bureaucracy, rituals, and a sense of drama. That is the US feeling that I know from the first time I went to the States back in ’95 (crossing the country via old Route 66) and have often experienced since. It sometimes feels gone now that it is shouted over by a 79-year-old baby…

Week 384: Embody tech to master human intelligences

First, my weekly update on the State of Cities of Things; the last interview is done, and it was a super nice one that added some extra layers to the directions the themes are already developing. A preview of some of the clustered insights/statements. More next week during the event!

How does this connect to the state of the internet, according to Fieke Jansen, who was invited by Waag Futurelab to present her vision? Fieke was one of the organizers of a great workshop on building mud batteries, envisioning regenerative futures for community AI, at ThingsCon last December. The mud batteries inspire a rethink of computing. That might be combined with the ideas on mesh-tastic, low-frequency peer-to-peer networks that the city of Amsterdam, among others around the world, is experimenting with. As Fieke states, the future is community. The future is designed for care. We should rethink the economic models.

This week’s triggered thought

Conversations about AI replacing human work tend to focus on the mind. Knowledge work, analysis, creativity: these are the battlegrounds. In earlier newsletters, I discussed the potential value of embodiment. In an article in De Groene Amsterdammer (thanks, Inge, for pointing it out), a specific case was made for embracing embodiment as a process.

Others believe a key distinctive capability is “feeling the vibe”. That is a statement by the founder of Nvidia, shared by Wouter van Noort, who opened a discussion on LinkedIn on the value of mind intelligence versus the power of understanding the vibe. The article triggered, as expected, a lot of discussion.

I'd like to dive a bit deeper into the claims made in the article in De Groene. The article criticizes the contemporary obsession with efficiency and productivity, arguing that generative AI continues a long tradition (from Taylorism and Fordism) of turning people into cogs in a system whose gains flow to capital, not workers. It challenges the idea that AI is a neutral tool we just need to “use well,” and instead highlights built-in problems of large language models: sycophancy, homogenization, structural “hallucinations”, and a reductive dependence on data that cannot capture lived experience.

One aspect I'd like to explore a bit more: their arguments against optimizing out repetition. Their claim: delegating "simple" tasks to AI undermines how we learn and find meaning.

I feel the argument. But I think it's missing something.

Not all repetition is the same. The factory worker doing the same motion for eight hours is not the parkour jumper drilling the perfect jump. The difference is agency. If you choose the repetition, it serves your own growth; it feeds you. If repetition is applied to you by someone else's optimization logic, it hollows you out.

This connects to embodied intelligence. The intelligence of moving, dancing, and using your limbs to operate something. The knowledge that lives in your hands and spine. Some people have more of this physical intelligence than others. Is there, next to IQ and EQ, already a BQ? All of us have centuries of evolutionary advantage in experiencing reality through a body.

Maybe in decades, robotic creatures and simulations will replicate this too. But for the next decade, we seem to have something distinctive. Returning to Fieke Jansen's regenerative computing, with a rethinking of computing and a valuing of a certain slowness, we might also have embodied (computing) infrastructures.

In exploring the state of living with intelligent, autonomous things in our cities, some interviewees discussed the different relations we have with our infrastructure. What affordances emerge in the entanglements between smart objects, environments, and people? An intelligence that might arise in relationships rather than nodes.

The risk of the current AI moment is creating an assistant layer so smooth, so helpful, that we lose grip on the world itself. As the De Groene article warns, we might end up like Radiohead's pig in a cage on antibiotics: fitter, happier, more productive, and somehow less present.

I wonder what happens when we shift the lens from mind to body, from functions to relationships, from efficiency to presence.

Notions from last week’s news

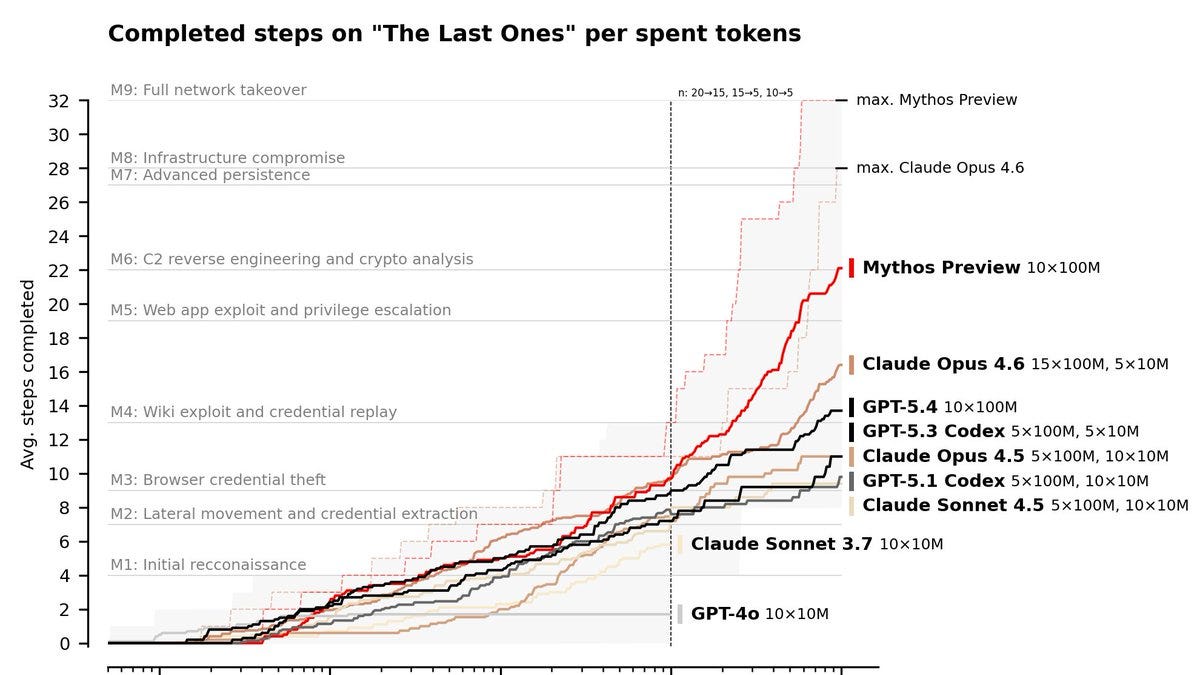

There is still a lot of ado about Anthropic's Mythos model. Is it really a game-changer? Or just the internal model that is a couple of months ahead? It might indicate that peak LLM has not been reached yet. For now, the model is definitely not suitable for mass use.

The focus is cybersecurity, at least that is the frame chosen. And it needs to be taken a little seriously for sure. It will have an impact on how we price risk.

Meta is also hinting at a new model: Muse Spark. Will they catch up in the race?

Human-AI relations

Do we need to create HR for the agents that become part of our teams?

Ed Zitron has some thoughts about how weird the AI bubble has become.

This is the new anthropomorphizing: LLMs on the couch of a psychiatrist.

The hidden costs of AI code: a lack of insight into what the final code will be, and rework might become tricky (and expensive).

That guy…

Design your complete workflows around AI.

In a way, OpenClaw is the delivery of the promise of Copilot.

Practice what you preach.

Physical AI

Is making digital music analog for the character of the sound a gimmick or a serious need for grounding? The device is pleasantly weird.

Robots contributing to human-based social apps, in a way.

So there will be a few people who can escape from Unitree...

Tech in civic societies

Running AI in closed enclaves as a new thing. Apps are emerging…

AI agents, not smartphones, will be the center of our digital life. According to Qualcomm.

A new paradigm for computing is context building for agent work.

Big tech data centers are not necessarily welcomed by all.

Only to lure in consumers. Open as an advertisement.

Fear or resistance to AI. Or just not liking OpenAI?

New word: tastecore.

Why art is crucial for HCI in the era of AI.

Weekly paper to check

Science fiction and innovation: A literature analysis on science fiction-related methods mapped into the innovation process

Despite numerous case studies in the literature describing this interface, a major part of methods applied seem to have been chosen in an unstructured, almost random way. This study investigates the literature in search of science fiction-related methods able to support the development of innovations.

Sven Schimpf, Michael Lauster, Jan Oliver Schwarz, Marcus John, Science fiction and innovation: A literature analysis on science fiction-related methods mapped into the innovation process, Futures, Volume 177, 2026, 103770, ISSN 0016-3287, https://doi.org/10.1016/j.futures.2026.103770.

What’s up for the coming week?

This week, Thursday is a popular day for conferences: CIIIC event, AI020 conference, UX Rotterdam (also on Friday). On Wednesday, Sensemakers has a workshop on going from LLM to AI agents.

Do I have followers who are visiting Milan Design Week? There is an aperitivo by the Things Magazine on Monday.

And don’t forget to RSVP for the State of Cities of Things.

Have a fruitful week!

About me

I'm an independent researcher through co-design, curator, and “critical creative”, working on human-AI-things relationships. You can contact me if you'd like to unravel the impact and opportunities through research, co-design, speculative workshops, curating communities, and more.

Currently working on: Cities of Things, ThingsCon, Civic Protocol Economies.