Extravert AI that contemplates itself

Weeknotes 379 - An AI that explains itself drives better than one that stays silent. Building trust with predictive relations. And the latest notions from the news of last week.

Dear reader!

There was no newsletter last week. I said farewell to my stepfather that day, at the end of a rollercoaster of a month of hospitalization.

Now I am happy to focus on the outside world again. I have been thinking about slightly updating the newsletter’s categories of notions from the news. I would like to put more stress on physical AI and civic societies, and on design for relations, which is, for me, core. So I came up with:

- Human-AI relations

- Physical AI

- Tech in civic societies

It also resembles the frames of Cities of Things, which I increasingly like to use as my lens on the world.

Week 379: Extravert AI that contemplates itself

In the meantime, the news is ruled by the geopolitical situation. To be cynical: it drives the development of autonomous warfare, such as drones, and it tests the edge cases of AI-driven decision making. And of course there are the discussions on the politics of AI, in Anthropic's fight with the DoD in the States and in the branding clusterfuck of OpenAI.

Two more mainstream podcasts (in Dutch) discussed topics related to this newsletter. De Volkskrant covered physical AI in China (not as present as you would think), and NRC's Zo Simpel is het Niet looked at agents through an economic lens. Is AI a machine (asset, capital) or an employee (capital or labour), and how will this play out in the economic models…

This week’s triggered thought

An AI that talks to itself drives better than one that stays silent. That's the finding from recent NVIDIA research on self-driving systems: vehicles with AI that reasons out loud—narrating its perception, intentions, and decisions—outperform those that process internally. The extroverted AI beats the introverted.

This landed for me in a week already thick with discourse about AI alignment, AI character, even AI that manipulates or blackmails to reach its goals. We're grappling with questions about what kind of entities we're building and how we should relate to them. But the NVIDIA finding cuts through the abstraction. It suggests something practical: transparency isn't just ethically preferable—it performs better.

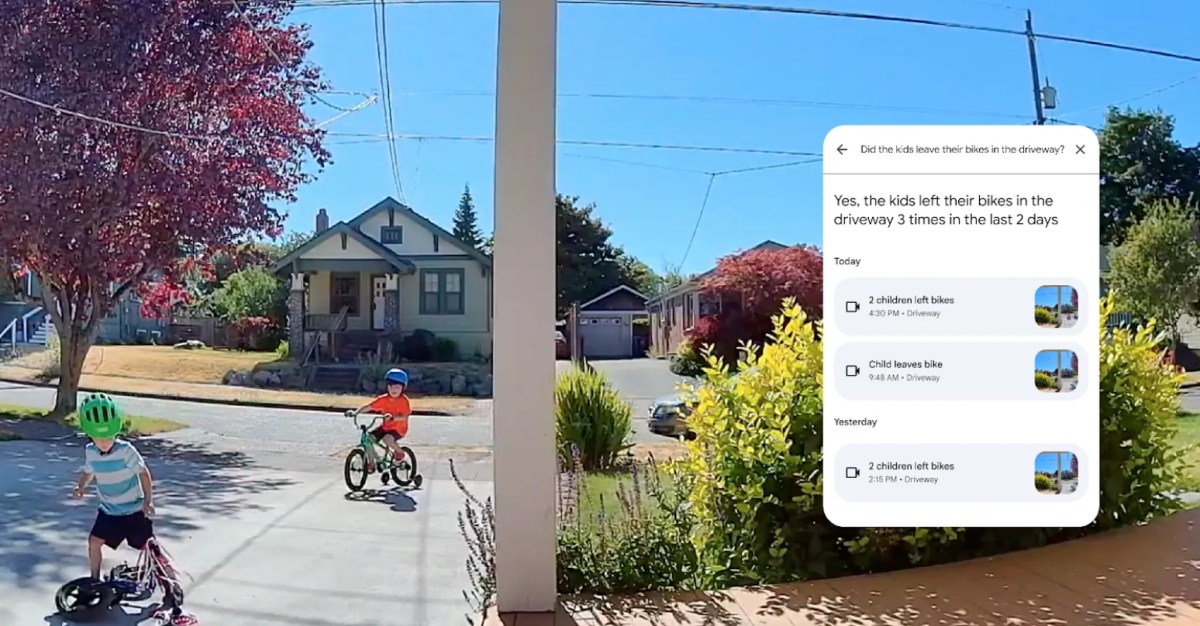

Why? The answer lies in trust, and specifically in what I've been calling predictive relations. Back in 2018, as part of my PhD exploration, I developed a framework for understanding how we build relationships with intelligent things. The core tension I identified was: there's a gap between what a device does in the world and our mental model of why it does it. With simple tools, this gap is small—we understand the lever, the wheel. But with AI-driven contemporary things, the gap widens dramatically. The system acts on knowledge we don't have access to. Consider a Tesla on autopilot suddenly braking on an empty highway. Seconds later, a collision unfolds ahead—the car predicted it, the driver didn't. The system worked perfectly. But in that moment before the accident became visible, the driver experienced something unsettling: the machine knew something they didn't, and acted on it without explanation. This is predictive knowledge doing exactly what it should—and still creating alienation. The gap between action and understanding is where trust lives or dies.

My framework proposed that predictive relations are shaped in the mental model—the internal representation we hold of how a system works and what it might do next. This mental model needs predictive power of its own: we need to anticipate the thing's behavior to feel in control of the relationship. When the system's predictions outpace our own—when it acts on data from networked sources, learned patterns, or contextual cues we can't perceive—we lose our footing. This is where the reasoning-out-loud AI becomes significant. By externalizing its process—"I see a vehicle ahead, it's braking erratically, I'm reducing speed as precaution"—the system feeds our mental model. It closes the gap. We can anticipate because we can follow the reasoning. The prediction becomes shared rather than opaque.

This matters enormously as AI becomes more physical. Robots in our homes, autonomous vehicles on our streets, drones in our airspace—these aren't just algorithms in the cloud. They're embodied agents sharing our spaces, making decisions that affect us directly. The alignment debates are important, but they often stay abstract. The practical question for designers is: how do we build things that people can trust?

The answer isn't just about making AI aligned—it's about making AI legible. Building in ways to "get in touch" with the reasoning, as I wrote years ago. Not dumbing down the intelligence, but surfacing it. The bicycle for the mind, as Steve Jobs once called the computer, only works if we can see the pedals—if we understand, even roughly, why the thing is doing what it's doing.

We are in the early days of learning to live with intelligent things. The NVIDIA finding is a small data point, but it suggests a principle: the path to trust runs through transparency. Not perfect transparency—that may be impossible with systems this complex—but enough to keep the human in the loop of understanding. Enough to maintain the predictive relation.

About me

I'm an independent researcher working through co-design, a curator, and a “critical creative”, focusing on human-AI-things relationships. You can contact me if you'd like to unravel the impact and opportunities through research, co-design, speculative workshops, curating communities, and more.

Currently working on: Cities of Things, ThingsCon, Civic Protocol Economies.

Notions from last week’s news

In general AI news: ChatGPT-5.4 is back on track.

In the meantime, there is a continuous unfolding of the Anthropic vs government saga:

Meta does not surprise anyone with its dubious processing of footage.

Human-AI relationships

The trope of the week, maybe: how agents are now recruiting humans.

Is superintelligence already here? Is it all about definition?

Hinting at future artificial brains, starting with a fruit fly, more algorithmic than intelligent?

A practical guide for OpenClaw from Every.

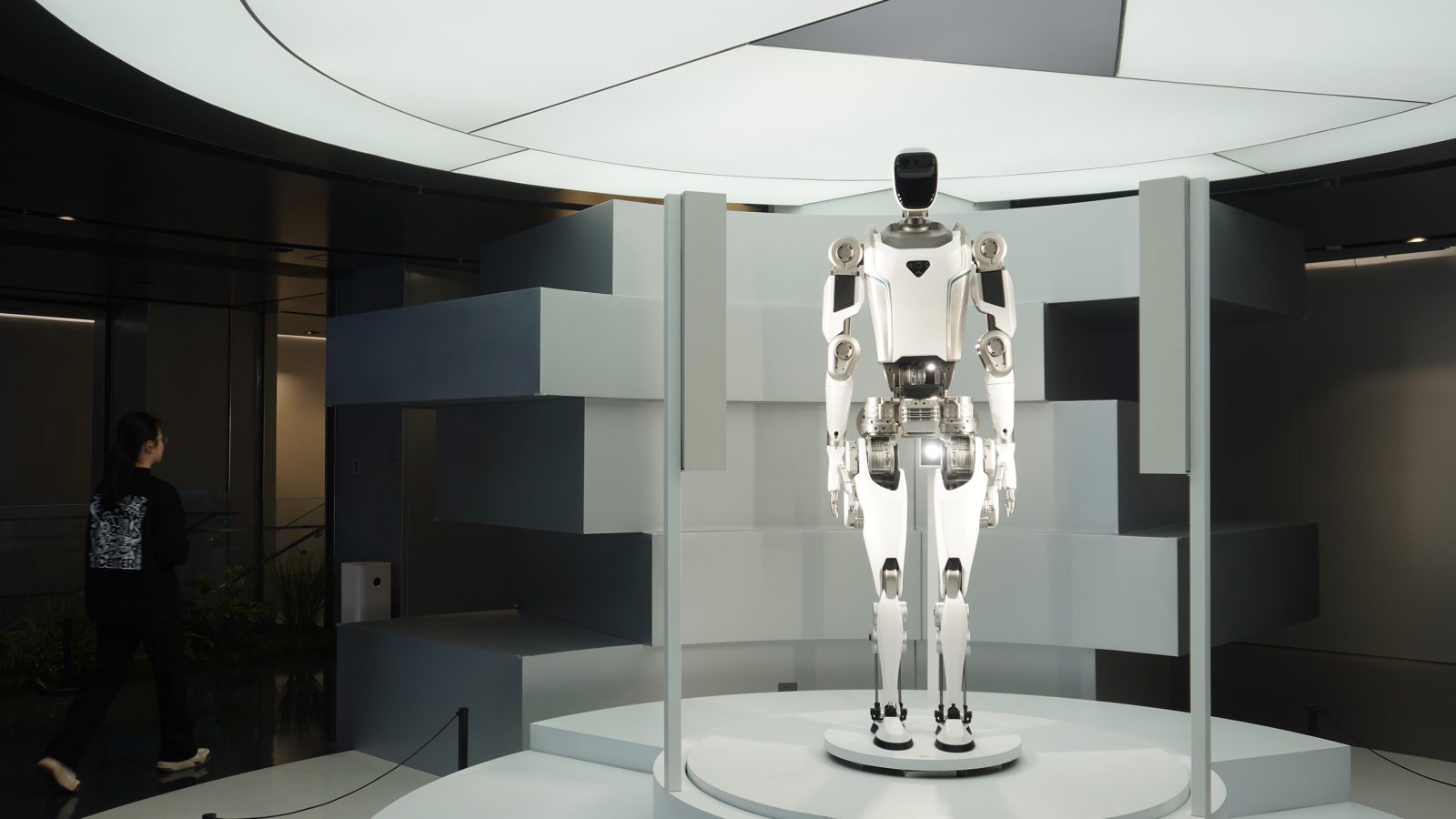

China leads in humanoid development, at least from an outside view. Is there a bubble?

Are we still missing the third element of artificial human intelligence: continuous learning? A model by Kevin Kelly.

We might need a new category for computing: lazy AI. Making people lazy, that is.

“Creative work is about to look like programming”. I am not sure. I think that programming is starting to look like art direction.

Just thinking: it is a small step from the functional use of AI for moderation in chats to AI becoming part of the community. And embedding prediction markets too?

Why are organisms more than machines?

Physical AI

Humanoids in factories. In solid German plants.

And glasses to wear your digital life.

Hardware is hard. It is a cliché but true. And also Congress.

Robots are happening at Mobile World Congress, too. Smartphone companies are believers. Robot phones still need a clear form factor, though.

Handheld AI, that feels like a specific category even. Made in India.

Physical AI brings self-learning systems to autonomous manufacturing. Makes sense, good to see.

A sign that robotics is becoming more mundane.

Making AI visible and tangible.

Tech in (civic) societies

Some crazy buildings to be expected here in Rotterdam.

A great history lesson on HyperCard.

The role of prediction markets in our society is under scrutiny. Will they become part of the commons? Is a civic-driven version of prediction markets possible?

Weekly paper to check

The Artificial Intelligence of Things. I am not totally sold on this framing, but it might offer an understandable notion.

The integration of the Internet of Things (IoT) and modern Artificial Intelligence (AI) has given rise to a new paradigm known as the Artificial Intelligence of Things (AIoT). In this survey, we provide a systematic and comprehensive review of AIoT research.

Shakhrul Iman Siam, Hyunho Ahn, Li Liu, Samiul Alam, Hui Shen, Zhichao Cao, Ness Shroff, Bhaskar Krishnamachari, Mani Srivastava, and Mi Zhang. 2025. Artificial Intelligence of Things: A Survey. ACM Trans. Sen. Netw. 21, 1, Article 9 (January 2025), 75 pages. https://doi.org/10.1145/3690639

What’s up for the coming week?

I will continue the explorative research into the state of cities of things, doing a couple of interviews, and keep discussing the civic protocol economies. I am also happy to be invited to give a guest lecture to the students of the Health by Design master's programme at Avans UAS. And I will check the Robodam event update.