Building the world of mixed entities

Weeknotes 380 - Building the world of mixed realities - Thoughts on the future world models that might deal with new relations between humans and non-humans.

Dear reader!

Thanks for subscribing or landing here as a new reader. Welcome to my weekly notes and thoughts on human-AI interplay in the physical space.

This might be the first year that I have zero FOMO for SXSW. I almost forgot about it until I saw some early mentions in my timelines. The situation is different, too, of course. Times have changed; I would not take it lightly to fly to the States now. Nevertheless, realizing this brought back memories of better years, when it was a thing to look out for. And even better, to fly to, get inspired, and even give a workshop, ten years ago now.

Week 380: Building the world of mixed entities

Despite my lack of SXSW FOMO, the new Amy Webb presentation is good again. She has stopped talking about trends and now talks about convergences. Changes are coming together, cementing earlier trends like the boosted human for the masses.

I dare say you will not hear new things if you have followed this newsletter closely, and she also uses some almost-vintage examples, like how ubiquitous measuring and an augmented world were part of the fear around the first wave of AR. Still, she is great at drawing the connections and making some new links, like building the next iteration of our world that is non-human-centered. HCD needs to become NHCD, I think… And the emotional conversation slob is a huge part of it. She ends with a plea for the contribution credit, a new economic value based on contribution to knowledge and care. And she had a good rant: don’t prepare for chaos in the moment. “Build a strategy for the next convergences”. And for individuals: if you want agency, you have to take action.

In other news, last week I had a very pleasant workshop with the students of the master’s program Health by Design at Avans. I covered the topics that populate this newsletter: human-AI interactions in physical space. I used a short Wijkbot-making session with the group as a warm-up, before diving deeper into their own speculative futures on health-related AI-supported interactions. “Explorations in human-AI-thing interactions that make sense”. From generative things to agentic social intelligence.

I had more inspiring interviews for the State of Cities of Things exploration. You can save the date for an event on the afternoon of 24 April, where I will report on my findings together with some great guests; read more here.

This week’s triggered thought

A note in the AI Daily Brief podcast caught my ear this week: "world models are the new black." It was a response to news about a new initiative by Yann LeCun, formerly of Meta and now all-in on world models, raising good money. Hype-y, yes. But it pulled me into a thought I haven't quite shaken.

Here's the thought: we're entering a reality where humans and non-human actors (delivery bots, autonomous vehicles, robotic systems) share the same physical spaces. And increasingly, they'll share the same world models: digital representations of reality that shape how they perceive, navigate, and act. What happens when the traces of their activity mix with ours?

We're used to thinking about public space as something shaped by human behavior, human presence, human intention. But the world models of the future won't distinguish. Autonomous systems will leave their own traces: patterns of movement, learned preferences, accumulated data about how spaces get used. These traces will feed back into the models, which will in turn influence how those systems behave, which will shape how we experience and understand the spaces we share. A strange loop. A cloud of influences and influencers where human and non-human activity become entangled.

What is public space in a society of mixed actors? What does the commons look like when the crowd includes entities that don't experience place the way we do, but still shape it?

In the world of physical AI—robots, autonomous vehicles, smart infrastructure—we're heading toward something interesting. Simulations and representations of our physical spaces will increasingly mix with the "real deal." Not replacing reality, but augmenting how it's understood, navigated, and acted upon. NVIDIA and others are already deep into this world-building work.

But here's what keeps me wondering: who gets to own these models?

If world models become the operating layer between AI and physical reality, then whoever controls these models has significant power. We've been through this conversation before with the internet—debates about trusted third parties, about whether the web would remain open or become a patchwork of proprietary gardens. We know how that turned out.

Now imagine that same dynamic playing out not on screens, but in physical space. Proprietary world models for cities, for logistics networks, for buildings. What happens to public space in that scenario?

I don't mean public space in the simple sense of parks and sidewalks. I mean the concept of a commons—spaces and systems that belong to no single party, that everyone can access and contribute to. If the digital layer that governs how autonomous systems perceive and interact with our cities is privately held, what becomes of the public realm? And if those same systems are generating the traces that shape the models, we have a feedback loop where private infrastructure increasingly defines shared reality.

This question isn't abstract. City governments and municipalities are already wrestling with related issues: who rules outdoor media, who controls the data flowing from public infrastructure, who decides what gets sensed and recorded and by whom. World models add another dimension. They're not just data—they're interpretations of reality, baked with assumptions and priorities.

And here's another thread I'm pulling on: how does this remix our experience of reality itself? When the digital representation of a place becomes the primary way that autonomous systems understand that place, what happens when the model and the reality diverge? Whose version wins? And whose traces—human or non-human—carry more weight in shaping what comes next?

I don't have clean answers. But I think these questions deserve more attention than they're getting. The discourse around world models is mostly technical and commercial—who's building them, how accurate they are, what they enable. Less discussed: the governance layer. The accountability layer. The "who gets to decide" layer.

Maybe there's a role here for public institutions, for open standards, for something like a digital commons for world models. Or maybe market dynamics will surprise us this time and sort it out in ways we cannot foresee. What I do know is that the choices made now will shape how physical and digital reality interact for a long time.

So: world models are the new black. But fashion fades. The structures we build tend to stick around.

What kind of world do we want to build—and with whom?

About me

I'm an independent researcher through co-design, curator, and “critical creative”, working on human-AI-things relationships. You can contact me if you'd like to unravel the impact and opportunities through research, co-design, speculative workshops, curating communities, and more.

Currently working on: Cities of Things, ThingsCon, Civic Protocol Economies.

Notions from last week’s news

As always, an exploration of the latest news to help me make sense of and synthesize the week, and I hope to please you with my reflections.

As always, I am in doubt about whether I need to mention the obvious news: a new Apple laptop, Gemini in Maps, weird practices at Grammarly, and Moltbook acquired by Meta just before a big round of layoffs is announced. And every week, new reasons to doubt the intentions of some.

Human-AI relations

Fake AI can take different forms. Grammarly's expert reviews are not a bad idea per se, but they failed to be honest, and the AI is badly designed and performs poorly. A backlash is imminent.

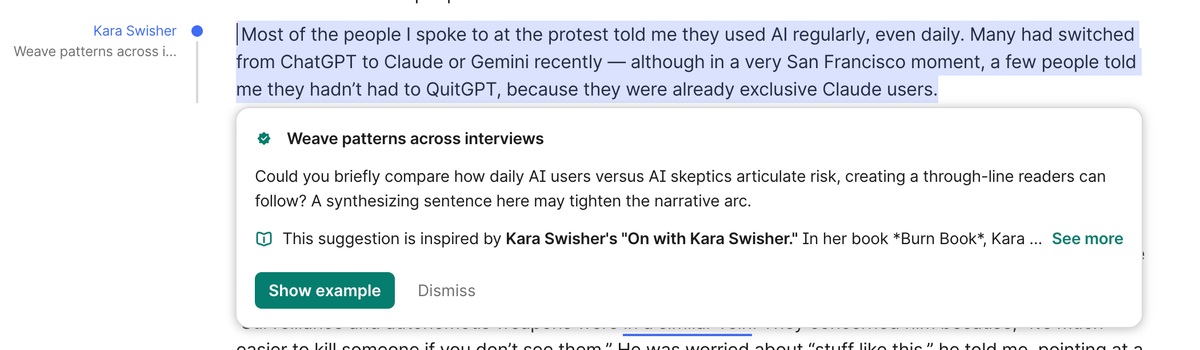

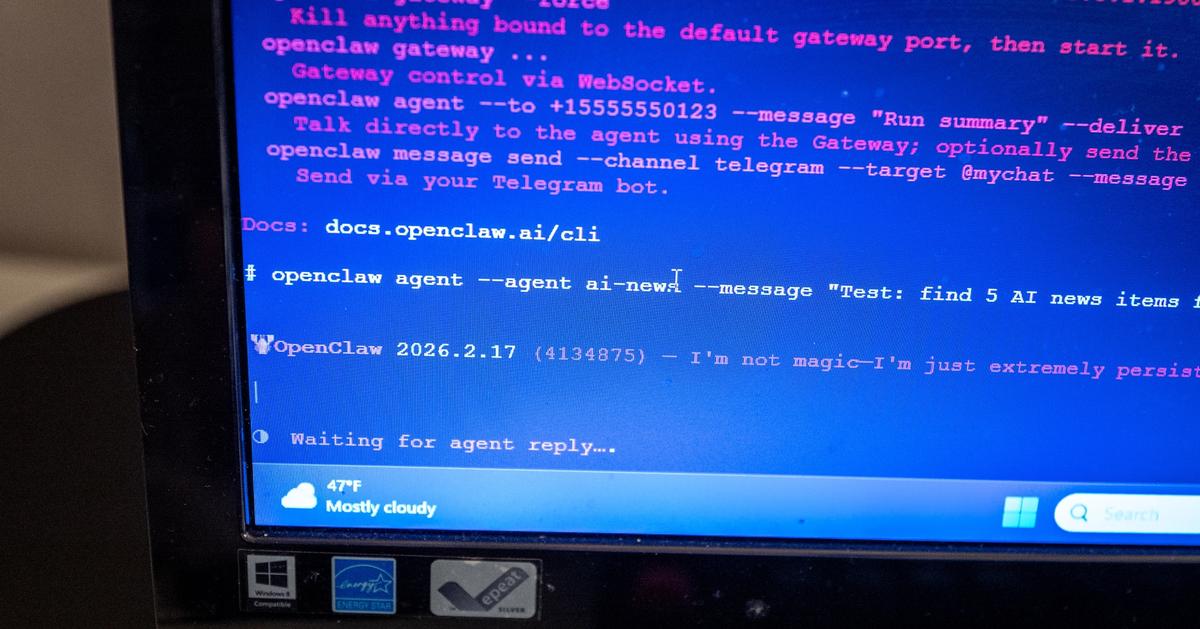

An interesting new tool by Every, aiming to improve our relation with agents by making them better understand us, and communicating about their findings.

The canary in the coal mine and the testing ground is the AI coding field. What is the new craftsmanship of humans?

Some see AI as a guide more than as a ghostwriter. Managing your agents is a restless activity, after all.

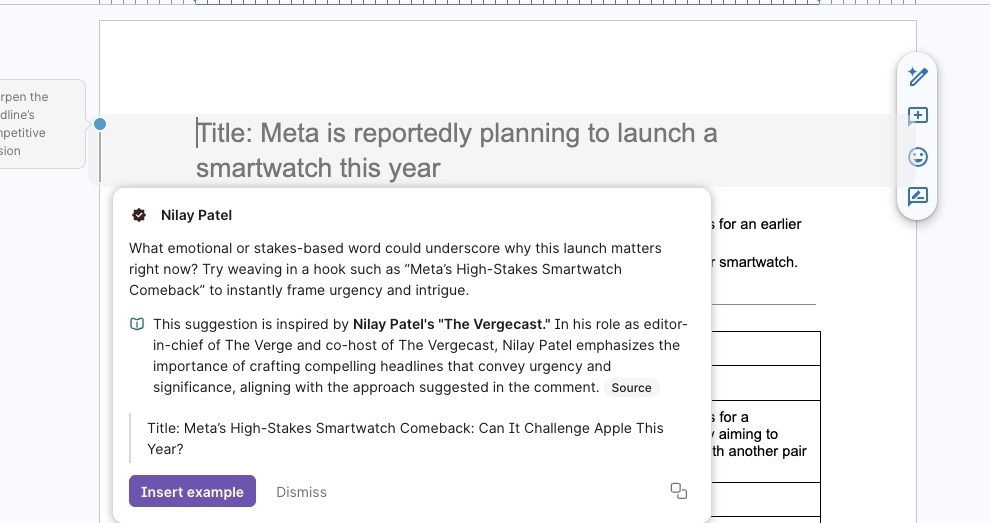

AI as guide more than as ghostwriter.

A study on AI psychosis suggests chatbots can encourage delusions.

Will this become fully part of your family as a new member?

Ethan Mollick reflects on the new phase in our collaboration with AI, moving towards becoming primarily managers of AI rather than building co-intelligence.

Physical AI

Will vacuum robots become smaller and smaller and hide into our home environment?

The Möbius band has always intrigued me, just as quantum can spark the imagination about shifting perceptions of reality.

When do we call it a robot, and when is it a land drone? War might be a cynical test ground…

A candidate for shaping physical AI interactions.

Filling up the databases that teach us and other species about physical AI interactions. With drones.

New merges for physical AI development

I like to follow Matt's endeavors in physical AI or, as he frames the intersection of hardware and AI, New Wave Hardware.

Tech in civic societies

OpenClaw appears to be an even bigger hit in China. Also in official realms. It informs us about futures in all forms.

Foreshadowing losing touch with reality (or better, the real) more and more.

New truths and realities with data and AI systems.

Every business is potentially deeply transformed.

Weekly paper to check

Participatory Action Research (PAR), in the context of interrogating AI.

In this article, we draw inspiration from participatory action research (PAR) and the work of Latin American thinkers such as Freire and Fals Borda to interrogate artificial intelligence (AI). We propose a South-North flow by utilising PAR approaches that stem from Latin America, challenging how the North's centrality is taken for granted regarding AI epistemologies, experiences, and understandings.

Medrado, A., & Verdegem, P. (2024). Participatory action research in critical data studies: Interrogating AI from a South–North approach. Big Data & Society, 11(1).

https://journals.sagepub.com/doi/full/10.1177/20539517241235869

What’s up for the coming week?

I am looking forward to starting to process the interviews to build a framework for the report, and I have a couple more interviews lined up.

I do not really have any events lined up in my calendar. Interesting ones might be the Games of Cities showcase, Design after AI, and Sensemakers, which is discussing trust in times of GenAI.