A new token for the cultural meme of thinking out loud

Thinking about how a cultural meme shaped by a device might evolve into a new form of 'minding' habits leveraging social intelligence.

Dear reader!

Yes, you are right, this newsletter is one day later than usual. I ‘predicted’ this last week: Easter Monday was too busy with other stuff to leave enough time to finish the newsletter. For the record: I capped the news at Monday.

Week 383: A new token for the cultural meme of thinking out loud

It is hard to ignore geopolitics, with another week of devastating developments. While writing this, the news drops that there might be a ceasefire deal. Is this like that movie meme of two cars racing toward each other to see who is the last to chicken out? We will see how it will be spun this time; Iran is claiming victory, which does not feel like a durable standoff…

But let’s shift towards the core topic of this newsletter: curiosity about all developments in human-AI-things, physical AI, urban robotics, and the impact of other intelligences on our society. This week's triggered thought is a signature one, which I elaborated through some interactions with the mind mirror of AI tools.

Looking back at last week, I was blessed to have six more interviews for the State of Cities of Things project. Only two left now. I can go on forever. It is very rewarding, but I also need to process the insights, and I have set a deadline to share and discuss the first results on 24 April. In case you missed it: check it out and join our event!

Next to that, we are planning for the ThingsCon RIOT and Salon at the end of June (26th in Rotterdam).

This week’s triggered thought

DJI announced a new mini mic this week. On its own, just another iteration of a pocket-sized wireless recorder. But this device made me think about something bigger.

Over the past year, the DJI mic has become more than functional gear for videomakers. It has become a cultural symbol. You see it clipped to the shirts of a new generation of content creators on TikTok and Instagram—people doing real-life podcasting, interviewing strangers on the street, becoming everyday reporters of urban life. The little square box signals: I am here to capture this moment with you.

What happens when that box gets an AI inside?

Not for transcription. For participation. A companion that doesn't just record the conversation but has been building a relationship with you over weeks. That knows what you've been thinking about, what you've been curious about, and can bring that into the moment—whether you're talking to a stranger or walking alone with your own thoughts.

I'm dictating this column right now—at least this first rough version. Speaking helps me sketch ideas, capture the initial thinking, reflect in the moment. It works better than typing when I'm still figuring out what I actually think. There's already a feedback loop happening: I speak, see my words appear, react to them, speak again. Later, I'll flesh it out on paper, but this early phase is where dictation shines.

At the start of this year, I wrote about social intelligence as a driver for 2026—the shift from designing for functions to designing for relations. These wearable AI devices might be where that shift gets tested first. Not just context-aware in the technical sense (location, time, activity) but socially context-aware. Understanding the texture of a conversation, the history of a relationship, the mood of a moment.

The tools we have now—Plaud, Limitless, Otter, even iPhone dictation—are optimized for capturing words. What would a device look like that's optimized for capturing you in relation? Will it be the long-rumored OpenAI device? A future AirPods iteration that's always listening, always learning? Or will it emerge from the cultural space the DJI mic already occupies—the street podcasters who've already normalized wearing their conversations?

Whatever the device might look like, the new state of internal monologue out in the open feels like a new iteration in our social relationship with intelligent things.

Notions from last week’s news

Maybe nice to mention: this week I captured around 50 articles through my RSS feeds in Readwise Reader, and another 30ish via other channels. That is the longlist. The biggest news event might be Anthropic’s code and model leaks (feature foreshadowing mainly). And Apple turned 50, with all the accompanying nostalgia. And as always, Sam Altman manages to draw attention to himself and OpenAI, with a puzzling acquisition, among other things. Perplexity is back to showing bad behavior.

The week also included 1 April, so I hope I did not capture any fake stories. With all the fake emails and other synthetic media slop, 1 April is both eroding in importance and more important than ever for analog fake experiences.

Human-AI relations

First-person user research on wearable AI.

How will we change our way of working through our relation with our witty helpers?

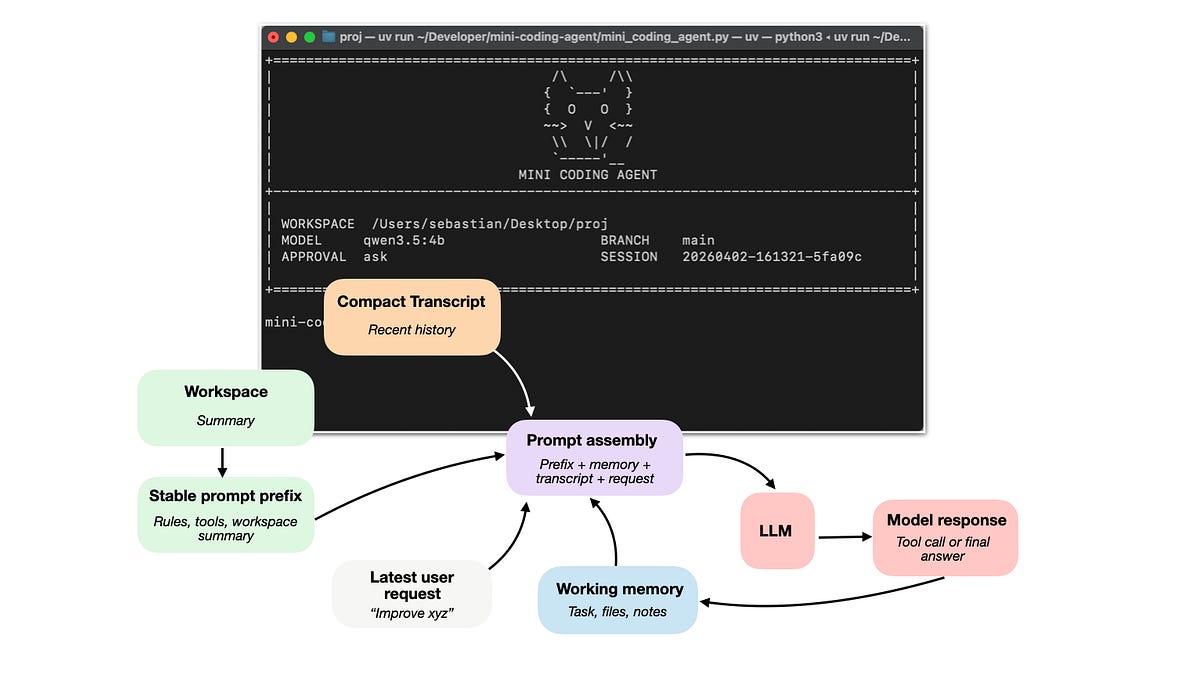

The overall design of coding agents’ components laid out.

Do we become more skilled operators than independent thinkers? And the cognitive costs of AI.

Real writing with AI. Still challenges.

Designing for human-agent interaction focuses on creating better interfaces.

Six layers of AI agents.

On agent ecologies. “Articulating agent ecologies with high-personality planetary computation”

Physical AI

I have not tried this yet, but might soon if possible. Will this AI companion now be the favorite commuter buddy?

A new form of blue screen that results in traffic gridlock.

More AI glasses are expected. Will they match my headphones?

US humanoids have Chinese insides.

Networks of support for building robotics.

Models for Physical AI might become like LLMs?

Tech in civic societies

Some tech policy and law reflections on the closing of Sora.

Resilience in the age of AI

Historic rulings on Meta and Google harming users due to their platform design.

Weekly reflection on AI bubbling.

Learnings from Japan on robot labor cannibalizing.

China will also regulate children's digital lives.

The craftocene: Superflux reflecting on the future of humanity

Weekly paper to check

Diving deep into cognition and AI.

Across studies, participants with higher trust in AI and lower need for cognition and fluid intelligence showed greater surrender to System 3. Tri-System Theory thus characterizes a triadic cognitive ecology, revealing how System 3 reframes human reasoning and may reshape autonomy and accountability in the age of AI.

Shaw, Steven D and Nave, Gideon, Thinking—Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender (January 11, 2026). https://doi.org/10.31234/osf.io/yk25n_v1

What’s up for the coming week?

Next to the writing task, I plan to check the State of Internet session with Fieke Jansen on critical infrastructures. And the event at the AI House, From Models to Machines, seems relevant. This one does not fit in my schedule, but might be nice: Vibe Coding with the Internet Archive. Also, check the events for IoT Day.

See you next week

About me

I'm an independent researcher through co-design, curator, and “critical creative”, working on human-AI-things relationships. You can contact me if you'd like to unravel the impact and opportunities through research, co-design, speculative workshops, curating communities, and more.

Currently working on: Cities of Things, ThingsCon, Civic Protocol Economies.